AI as a flying blue brain? How metaphors influence our visions of AI

Why is Artificial Intelligence so commonly depicted as a machine with a human brain? The narratives and metaphors of science fiction, media outlets and special interest groups about what is described by the term “Artificial Intelligence” (to make use of a metaphor already) have affected our view of AI systems in society. Metaphors are all around us and they are extremely powerful: they access our memories, trigger emotions, influence our attitude and shape our expectations about the future. This article shows why one misleading metaphor became so prevalent.

Language-reflective approaches to thinking about our future with AI

When you type in “AI” in a search engine of your choice you may not be surprised to find that most images depict a human brain in loud blue colours. Though on second thought, this is astonishing: Why would a machine have a human brain? Why would searching for a branch of computer science show depictions of the centre of our nervous system?

Ascribing human characteristics to AI systems is a widespread metaphorical concept in contemporary descriptions of AI. In visual as well as textual metaphors, AI systems are portrayed as human-like (European Commission 2018), in disregard of actual technological limitations. One can easily think of examples: artificial “intelligence”, machine “vision”, machine “learning” or artificial “neural” networks. All these metaphors derive from the same metaphorical concept: attributing human characteristics to AI systems (see also this blogpost by C. Djeffal).

By definition, metaphors are transfers of meaning: they create a linguistic link between two separate semantic domains (Schmitt et al. 2018). One phenomenon is understood and experienced through the properties of another (Lakoff & Johnson 2003:5).

The role of metaphors has been the subject of extensive research in a variety of fields, from rhetoric, linguistics, philosophy and political science to cognitive science and future studies. This research shows that metaphors are more than puns and phrases: future visions are manifested linguistically, and language thus becomes the medium and interface between present constellations and the future (Lösch & Ferrari 2017, Grunwald 2009).

Politics is war! Financial Markets are a force of nature! Data is the new oil!

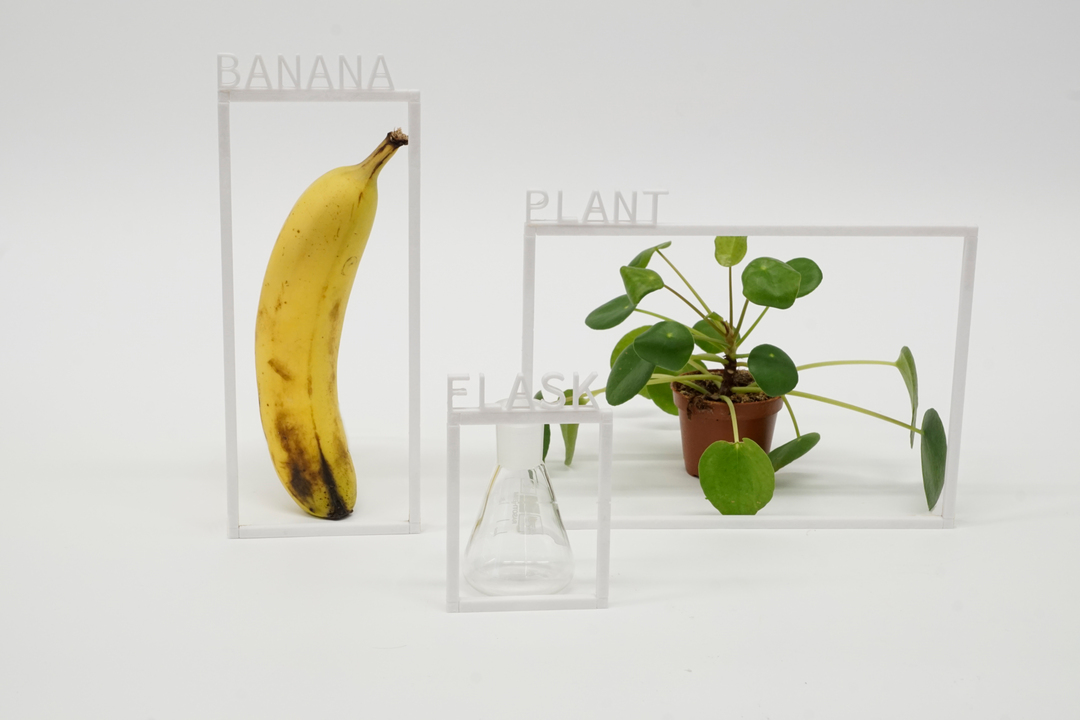

Metaphors are documents of metaphorical concepts: statements of analogues that share common imagery (Schmitt et al. 2018). While we use metaphors to make sense of the world around us, they also help us to understand new technologies and tools. Their effect is threefold: a metaphor emphasises the novel by referring to something familiar, it highlights hidden similarities, and it does so at the expense of abstracting away some crucial features (Stark & Hoffmann 2019).

Myths and Metaphors of Recreation: Is there anything human in these machines?

Anthropomorphic metaphors render non-human entities worthy of moral care and consideration. Depicting AI as a human brain evokes a sense of familiarity and kinship. The brain metaphor implies that what is being created is innately similar to us, sharing the brain as the source of the qualities that define us as humans (Salles, Evers & Farisco 2020). Alikeness makes the notion of social acceptance salient and propagates the idea that a machine works like a human brain. What lies underneath is the hope and belief that non-human systems are analogous to human minds and that, with adequate training, human intelligence can be technically simulated (Crawford 2021). Interestingly, pictorial metaphors of AI such as the brain-inspired AI metaphor are commonly rendered in blue, green and purple colours, counterbalancing the attributed human resemblance with coldness and distance (Ganesh and Gilman 2020).

The aspiration to recreate a living, conscious being has been a prevailing motif in human cultural history: from the tales of the golem and Mary Shelley’s Frankenstein to more recent portrayals like the replicants in the movie “Blade Runner” (Hermann 2021). Anthropomorphisms have a long history and the yearning for creation remains a prevalent narrative (Cave et al. 2018).

Mind (brain) is a computer is a mind (brain)

Describing and depicting machines as a human brain is a bidirectional metaphor: the source and target domains of the metaphor can be exchanged. Historically, new technologies were compared to the human brain. The simpler, sensuously “known” object was the mind, whose characteristics were used to illustrate the functioning of telegraphs, hydraulic machines or electrical circuits (Cobb 2021). Each time a new technology was introduced, new analogies to human brains were drawn.

Today, the formerly unknown machine has become better understood than the human brain (West & Travis 1991). As a result, the mechanical metaphor has switched target and source: the functionalities of computers are nowadays used in neuroscientific research to help illustrate and understand the structure and functioning of our brains (Cobb 2021). Expressions such as “I am feeling wired today” or “Can you do a quick download of your findings?” have also become commonplace in everyday language.

Metaphors dissolve with ongoing research and the surfacing of dissimilarities

Comparing machines to the human mind, as the metaphor of AI as a human brain does, provides expressive power and heuristic value. This explains why the metaphorical concept has captured the popular imagination and has been cultivated in public discourse for the past 70 years. As a goal-oriented metaphor, it has provided a powerful narrative for policymakers, researchers and engineers alike. Yet with a perceived gap between the goal of replicating human intelligence and actual technological advancement, the metaphorical concept may start to lose momentum (see also better images of AI).

New metaphors as a chance to shift our vision towards alternative futures

When we think and talk about the future, we use concepts of the past to imagine and explain unknown phenomena through metaphors. Yet metaphors carry normative implications that shape our thoughts (Wyatt 2021, Katzenbach & Larsson 2017). They help us navigate the unknown by emphasising certain features. Using language and imagery that are more precise, however, can illuminate aspects of sociotechnical systems that metaphors hide and enrich our understanding of technology and its impact (Johnson & Verdicchio 2017). Shifting linguistic frames through new metaphors and narratives for AI could help us make alternative future visions plausible and allow needed new visions to emerge.

Sources

Cave, S., Craig, C., Dihal, K., Dillon, S., Montgomery, J., Singer, B. and Taylor, L., (2018). Portrayals and perceptions of AI and why they matter. [online] The Royal Society. Available at: https://royalsociety.org/topics-policy/projects/ai-narratives/ [Accessed 26 March 2022].

Cobb, M., (2021). How technology has inspired neuroscientists to reimagine the brain. [online] Vox. Available at: https://www.vox.com/unexplainable/2021/11/17/22770720/brain-science-technology-neurology-matthew-cobb [Accessed 26 March 2022].

European Commission, (2018). Artificial Intelligence for Europe. COMMUNICATION FROM THE COMMISSION. [online] Brussels: European Commission, p.1. Available at: https://eur-lex.europa.eu/legal-content/EN/TXT/?uri=COM%3A2018%3A237%3AFIN [Accessed 24 March 2022].

Ganesh, M. and Gilman, N., (2020). Making Sense of the Unknown – The Rockefeller Foundation. [online] The Rockefeller Foundation. Available at: https://www.rockefellerfoundation.org/blog/making-sense-of-the-unknown/

Grunwald, A. (2009). Wovon ist die Zukunftsforschung eine Wissenschaft? In: R. Popp and E. Schüll (eds.): Zukunftsforschung und Zukunftsgestaltung, Berlin; Heidelberg: 25-26.

Hermann, I. (2021). Artificial intelligence in fiction: between narratives and metaphors. AI & SOCIETY. https://link.springer.com/10.1007/s00146-021-01299-6

Johnson, D. G., & Verdicchio, M. (2017). Reframing AI Discourse. Minds and Machines, 27(4), 575–590. https://doi.org/10.1007/s11023-017-9417-6

Katzenbach, C, Larsson, S (2017) Imagining the digital society – metaphors from the past and present. Available at: https://www.hiig.de/en/imagining-the-digital-society-metaphors-from-the-past-and-present/ [Accessed 26 March 2022].

Lakoff, G, Johnson, M (2003) Metaphors We Live by. Chicago, IL: The University of Chicago Press.

Salles, A., Evers, K., & Farisco, M. (2020). Anthropomorphism in AI. AJOB Neuroscience, 11(2), 88–95. https://doi.org/10.1080/21507740.2020.1740350

Schmitt, R., Schröder, J., & Pfaller, L. (2018). Systematische Metaphernanalyse. Springer Fachmedien Wiesbaden. https://doi.org/10.1007/978-3-658-21460-9

Stark, L., & Hoffmann, A. L. (2019). Data is the new what? Popular metaphors and professional ethics in emerging data cultures. Journal of Cultural Analytics, 1(1), 1-22.

West, D. and Travis, L., (1991). The Computational Metaphor and Artificial Intelligence: A Reflective Examination of a Theoretical Falsework. AI Magazine, 12(1), 64-78. https://doi.org/10.1609/aimag.v12i1.885

Wyatt, S. (2021). Metaphors in critical Internet and digital media studies. New Media & Society, 23(2), 406–416. https://doi.org/10.1177/1461444820929324

This post represents the view of the author and does not necessarily represent the view of the institute itself. For more information about the topics of these articles and associated research projects, please contact info@hiig.de.
