
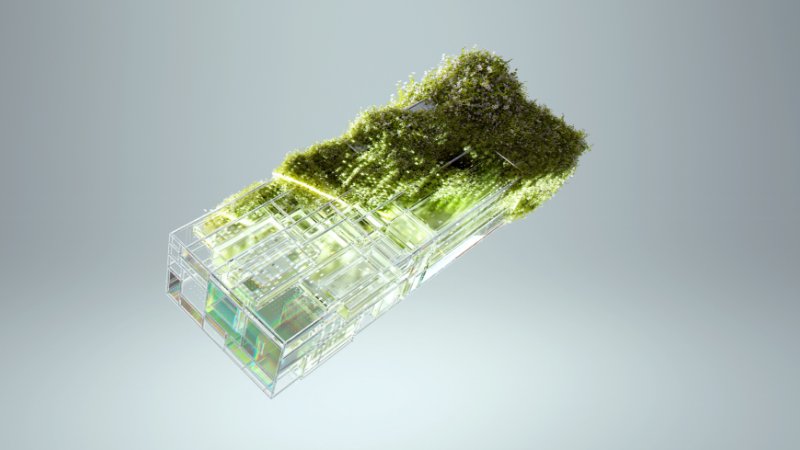

AI between climate change and climate protection

Climate change is one of the greatest challenges of our time. At the same time, interest in developing and using artificial intelligence (AI) remains high, even though AI requires large amounts of energy and natural resources to operate. This article explains how climate change and AI interact, and how AI can help in the fight against climate change.

To better understand the tension between climate change and AI, we first need to look at what is behind AI – beyond programming code and mathematics. Consider the following example: we want to ask an AI bot how we can combat climate change in our daily lives. First, we need a device through which we can access the bot. Then, we enter a suitable prompt into the bot. For example: “What three things can I do in my daily life to combat climate change?” This query is sent via various networks to the bot’s server, where the underlying AI model processes it, generates a response, and sends the response back to the bot.

Generating a response requires a great deal of energy and natural resources. This begins with the device used to access the bot and encompasses the infrastructure through which the request is sent to the AI bot’s server. Last but not least, we must also take into account the servers on which the AI bot and the model are hosted. Servers play a central role in this process, storing not only the AI model but also the data required to train it. In addition, they provide the computing power needed when the model learns (training) and when it is queried (inference).

Energy consumption, emissions and water usage of large language models

To answer our question about combating climate change, we need a large language model that has been trained for such tasks, that is, to answer questions in text form. While such models are currently in the public spotlight, they represent only a small fraction of all possible AI models and applications. Examples of large language models include the GPT models, Claude, Gemini and BLOOM.

To train our model, large amounts of data (in this case: text) had to be collected and prepared for training. During the learning process, the model relies on probabilities. More precisely, it does not understand the specific content of the texts, but rather learns to associate words with one another. For example, the phrase “To do something against climate change, I can…” is more often followed by “…cycle to work” than by “…go on a long-distance trip”. Therefore, if an AI model is trained on many texts about combating climate change, it recognises such relations within the texts and can reproduce them when prompted. However, errors can occur in this process. The generation of false information by large language models (“hallucination”) is particularly problematic.

What about the energy consumption and emissions associated with training and inference for such AI models? According to a 2023 study, training the BLOOM AI model used 433 MWh of electricity. For comparison, the net electricity consumption of all German households in 2024 was 133 TWh, or around 1,591 kWh (about 1.6 MWh) per person (2024 population). Training BLOOM therefore consumed roughly as much electricity as around 272 people in Germany used at home in 2024. The emissions associated with training BLOOM were estimated at around 25 tonnes of CO₂ equivalents.
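The comparison above can be checked with a few lines of arithmetic. The sketch below uses only the figures quoted in the text; the constant and function names are illustrative, and the per-person value is read as 1,591 kWh, which is what 133 TWh spread across the German population works out to.

```python
# Figures quoted in the article; units are made explicit here.
BLOOM_TRAINING_MWH = 433      # electricity used to train BLOOM
HOUSEHOLD_TOTAL_TWH = 133     # net household electricity, Germany, 2024
PER_PERSON_KWH = 1_591        # household electricity per person, 2024

KWH_PER_MWH = 1_000
KWH_PER_TWH = 1_000_000_000


def implied_population(total_twh: float, per_person_kwh: float) -> float:
    """Population implied by a national total and a per-person value."""
    return total_twh * KWH_PER_TWH / per_person_kwh


def person_equivalents(training_mwh: float, per_person_kwh: float) -> float:
    """How many people's annual household electricity equals the training run."""
    return training_mwh * KWH_PER_MWH / per_person_kwh


if __name__ == "__main__":
    people = person_equivalents(BLOOM_TRAINING_MWH, PER_PERSON_KWH)
    population = implied_population(HOUSEHOLD_TOTAL_TWH, PER_PERSON_KWH)
    print(f"Training BLOOM ~ household electricity of {people:.0f} people")
    print(f"Implied population: {population / 1e6:.1f} million")
```

The implied population serves as a plausibility check on the units: it only lands in the range of Germany's population if the per-person figure is in kWh rather than MWh.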

The same study estimates that BLOOM consumes around 0.004 kWh of energy per query (914 kWh for 230,768 queries). Energy consumption per query is therefore around eight orders of magnitude lower than that of the training phase, or, to put it another way, around a hundred million times lower. However, the more people use the model, the higher the total energy consumption: after roughly a hundred million queries, inference has used as much energy as training did. Popular services such as ChatGPT receive around 18 billion queries per week, making them a substantial driver of energy consumption.
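The relationship between training and inference energy can be reproduced from the numbers quoted above. This is a minimal sketch: the function names are illustrative, and it assumes that every query costs the same average amount of energy, which is a simplification.

```python
import math

# Figures quoted in the article for BLOOM.
TRAINING_KWH = 433_000        # 433 MWh for training
INFERENCE_KWH = 914           # measured over the query sample below
INFERENCE_QUERIES = 230_768


def energy_per_query_kwh(total_kwh: float, queries: int) -> float:
    """Average energy per query over a measured sample."""
    return total_kwh / queries


def training_to_query_ratio(training_kwh: float, per_query_kwh: float) -> float:
    """How many times larger the training energy is than one query."""
    return training_kwh / per_query_kwh


def break_even_queries(training_kwh: float, per_query_kwh: float) -> int:
    """Number of queries after which inference has used as much as training."""
    return math.ceil(training_kwh / per_query_kwh)


if __name__ == "__main__":
    per_query = energy_per_query_kwh(INFERENCE_KWH, INFERENCE_QUERIES)
    ratio = training_to_query_ratio(TRAINING_KWH, per_query)
    print(f"Energy per query: {per_query:.4f} kWh")
    print(f"Orders of magnitude: {math.floor(math.log10(ratio))}")
    print(f"Break-even after {break_even_queries(TRAINING_KWH, per_query):,} queries")
```

The break-even count illustrates why usage volume matters: for a widely used model, cumulative inference energy can overtake the one-off training cost.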

But what exactly is BLOOM, and what makes this language model so special? BLOOM was developed by over 1,000 researchers as part of an initiative to create a transparent, open-source, and multilingual model. This is also why we have a good understanding of BLOOM’s energy consumption and emissions, as opposed to non-public models such as GPT-3, for which we have to rely on estimates. When calculating emissions from electricity consumption, spatial and temporal factors also play a role, because these emissions depend on the electricity mix used, which varies with server location as well as the time of day and the season.
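The dependence of emissions on the electricity mix can be written as energy multiplied by the carbon intensity of the grid. The sketch below derives the intensity implied by the two BLOOM figures quoted in this article (433 MWh and around 25 tonnes of CO₂ equivalents). The two comparison intensities are hypothetical placeholders chosen for illustration, not measured values for any real grid.

```python
def emissions_kg(energy_kwh: float, intensity_g_per_kwh: float) -> float:
    """Emissions in kg CO2e for a given energy use and grid carbon intensity."""
    return energy_kwh * intensity_g_per_kwh / 1_000


def implied_intensity_g_per_kwh(emissions_tonnes: float, energy_mwh: float) -> float:
    """Carbon intensity implied by reported emissions and energy use.

    1 tonne = 1,000,000 g and 1 MWh = 1,000 kWh.
    """
    return emissions_tonnes * 1_000_000 / (energy_mwh * 1_000)


# Hypothetical intensities (g CO2e per kWh), for illustration only.
HYPOTHETICAL_GRIDS = {
    "low-carbon mix": 50.0,
    "fossil-heavy mix": 500.0,
}

if __name__ == "__main__":
    implied = implied_intensity_g_per_kwh(emissions_tonnes=25, energy_mwh=433)
    print(f"Implied intensity for BLOOM training: {implied:.1f} g CO2e/kWh")
    for name, intensity in HYPOTHETICAL_GRIDS.items():
        tonnes = emissions_kg(433_000, intensity) / 1_000
        print(f"Same training run on a {name}: {tonnes:.1f} t CO2e")
```

The same training run yields very different emissions depending on the intensity passed in, which is the point made in the text about server location and timing.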

In addition, there is the water consumption associated with cooling the servers and generating electricity for them. For instance, querying GPT-3 in the US uses around 17 ml of water on average, whereas querying it in Ireland uses around 7 ml. Here, too, both spatial and temporal factors play a role. Outside temperature, for example, has an important impact on cooling: in warmer regions, in summer and during the day (when the sun is shining), servers require more intensive cooling.
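To get a feeling for how these per-query figures add up, the following sketch scales them to a larger number of queries. It uses only the two averages quoted above; the query volume of one million is an arbitrary illustrative choice, and the names are hypothetical.

```python
# Average water use per GPT-3 query as quoted in the article (millilitres).
WATER_ML_PER_QUERY = {
    "US": 17,
    "Ireland": 7,
}


def water_litres(queries: int, ml_per_query: float) -> float:
    """Total water use in litres for a number of queries."""
    return queries * ml_per_query / 1_000


if __name__ == "__main__":
    queries = 1_000_000  # illustrative volume, not a figure from the article
    for location, ml in WATER_ML_PER_QUERY.items():
        litres = water_litres(queries, ml)
        print(f"{location}: {litres:,.0f} litres per {queries:,} queries")
```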

The timing of the training and inference phases, as well as the location of the servers, is therefore crucial for energy consumption, and hence for reducing emissions and water consumption. While more solar power and thus lower-emission electricity can be generated during the day, higher outdoor temperatures necessitate more intensive cooling of the servers, which increases water consumption. Similarly, water consumption can increase when servers are powered by low-emission hydropower. The optimal time for server use and the optimal location of servers must therefore always be considered in light of the various consumption factors.

AI applications for climate protection

What about the positive impact of AI applications on the environment? Let’s start by examining language models such as GPT-3. Such models could potentially make an indirect positive contribution by supporting environmental education, for example, by correctly answering the question “What three things can I do in my daily life to combat climate change?” Another example is language barriers, which can be reduced through the use of AI models, thereby enabling new groups to participate in debates on climate change. However, accurately assessing the positive impacts of such models is difficult and is the subject of current research.

The specific positive environmental impacts of AI applications are easier to assess for smaller models tailored to specific applications. For instance, AI can optimise the control of industrial cooling systems, which directly reduces resource and energy consumption. AI-supported monitoring of peatlands can also contribute to environmental conservation: as peatlands store large quantities of CO₂, their protection is essential to combat climate change. Another example is the use of AI in transportation, particularly in demand modelling and infrastructure planning. Such modelling can directly affect the length of transport routes and the choice of means of transport. This, in turn, is directly linked to emissions: longer distances generally result in higher emissions, and air travel causes higher emissions than rail travel. Such AI models are usually much smaller than large language models and therefore consume fewer resources, making them particularly attractive in the context of AI and climate change.

Overall assessment: It’s difficult

It is difficult to provide an overall assessment of the environmental impact of an AI application. First of all, the lack of data and standards poses a major challenge, particularly when it comes to the resource consumption of proprietary AI models such as GPT-3. Without this data, this consumption can only be estimated, and without standards, even published data is difficult to compare. This leads to the next challenge: determining the energy consumption of AI is often highly complex. Large AI models are embedded in various digital applications and require a wide range of digital infrastructure. In addition, there is the manufacturing of the hardware. All these complexities must be taken into account for an overall assessment. Furthermore, even if an AI application has a positive overall impact, it can ultimately lead to increased consumption. The so-called Jevons paradox, or rebound effect, states that savings generated by efficiency can lead to an overall increase in consumption because the same system is used more frequently (see Source 1 and Source 2).

To address these challenges, we need more than just additional research on AI and the environment. Legislation is also required: there must be mandatory transparency requirements for the reporting of environmental data by large AI companies, as well as specific targets. There must also be mechanisms in place not only to report violations of these requirements but also to hold companies accountable.

Such measures for improved transparency and accountability are not just a matter of environmental justice: those who are already part of a disadvantaged group often suffer the most from the impacts of climate change. Solutions to climate change are therefore complex and must always be considered alongside issues of social justice.

This article was first published in German on 10 February 2026 by the Zentrum Liberale Moderne.

References

Bender E. M., Gebru T., McMillan-Major A. & Shmitchell S. (2021). On the Dangers of Stochastic Parrots: Can Language Models Be Too Big? ACM DL Digital Library. https://dl.acm.org/doi/10.1145/3442188.3445922

BLOOM (2022). BigScience Large Open-science Open-access Multilingual Language Model (Version 1.3). Hugging Face. https://huggingface.co/bigscience/bloom

Bölling N. (2025). 18 Milliarden Anfragen pro Woche: Das machen Menschen wirklich mit ChatGPT. t3n digital pioneers. https://t3n.de/news/18-milliarden-anfragen-pro-woche-das-machen-menschen-mit-chatgpt-1707588/

Ebert K., Alder N., Herbrich R. & Hacker P. (2025). AI, Climate, and Regulation: From Data Centers to the AI Act. arXiv. https://arxiv.org/abs/2410.06681

Kaack L. H., Donti P. L., Strubell E., Kamiya G., Creutzig F. & Rolnick D. (2022). Aligning artificial intelligence with climate change mitigation. nature climate change. https://www.nature.com/articles/s41558-022-01377-7

Li P., Yang J., Islam M. A. & Ren S. (2025). Making AI Less “Thirsty”: Uncovering and Addressing the Secret Water Footprint of AI Models. arXiv. https://arxiv.org/abs/2304.03271

Luccioni A. S., Viguier S. & Ligozat A.-L. (2022). Estimating the Carbon Footprint of BLOOM, a 176B Parameter Language Model. arXiv. https://arxiv.org/abs/2211.02001

Luccioni A. S., Strubell E. & Crawford K. (2025). From Efficiency Gains to Rebound Effects: The Problem of Jevons’ Paradox in AI’s Polarized Environmental Debate. arXiv. https://arxiv.org/abs/2501.16548v1

Rillig M. C., Ågerstrand M., Bi M., Gould K. A. & Sauerland U. (2023). Risks and Benefits of Large Language Models for the Environment. ACS Publications. American Chemical Society. https://pubs.acs.org/doi/10.1021/acs.est.3c01106

Rolnick D., Donti P. L., Kaack L. H., Kochanski K., Lacoste A., Sankaran K., Ross A. S., Milojevic-Dupont N., Jaques N., Waldman-Brown A., Luccioni A. S., Maharaj T., Sherwin E. D., Mukkavilli S. K., Körding K. P., Gomes C. P., Ng A., Hassabis D., Platt J. C., Creutzig F., Chayes J. & Bengio Y. (2022). Tackling Climate Change with Machine Learning. ACM DL Digital Library. https://dl.acm.org/doi/10.1145/3485128

Solaiman I., Talat Z., Agnew W., Ahmad L., Baker D., Blodgett S. L., Chen C., Daumé III H., Dodge J., Duan I., Evans E., Friedrich F., Ghosh A., Gohar U., Hooker S., Jernite Y., Kalluri R., Lusoli A., Leidinger A., Lin M., Lin X., Luccioni S., Mickel J., Mitchell M., Newman J., Ovalle A., Png A.-M., Singh S., Strait A., Struppek L. & Subramonian A. (2024). Evaluating the Social Impact of Generative AI Systems in Systems and Society. arXiv. https://arxiv.org/abs/2306.05949

Statistisches Bundesamt (Destatis) (2024). Bevölkerung: Deutschland, Stichtag. https://www-genesis.destatis.de/datenbank/online/statistic/12411/table/12411-0001

Umweltbundesamt (2025). Energieverbrauch privater Haushalte. https://www.umweltbundesamt.de/daten/private-haushalte-konsum/wohnen/energieverbrauch-privater-haushalte#stromverbrauch-mit-einem-anteil-von-rund-einem-funftel

Xiao T., Nerini F. F., Matthews H. D., Tavoni M. & You F. (2025). Environmental impact and net-zero pathways for sustainable artificial intelligence servers in the USA. nature sustainability. https://www.nature.com/articles/s41893-025-01681-y

This post represents the view of the author and does not necessarily represent the view of the institute itself. For more information about the topics of these articles and associated research projects, please contact info@hiig.de.
